Multimodal AI

Multimodality will be a key feature of AI for the AEC industry.

One of the unique things about the AEC industry is that our work usually spans both the physical world (e.g., construction sites) and a world of documentation (e.g., drawings, reports, specifications). The ability to understand both worlds and translate information between them is key to success.

Adding to this complexity is the fact that our documentation takes multiple forms. Some of it is mostly text-based (reports, specifications), and some is more visual (photos, drawings, presentations).

There’s a word for this concept: multimodality.

Per ChatGPT…

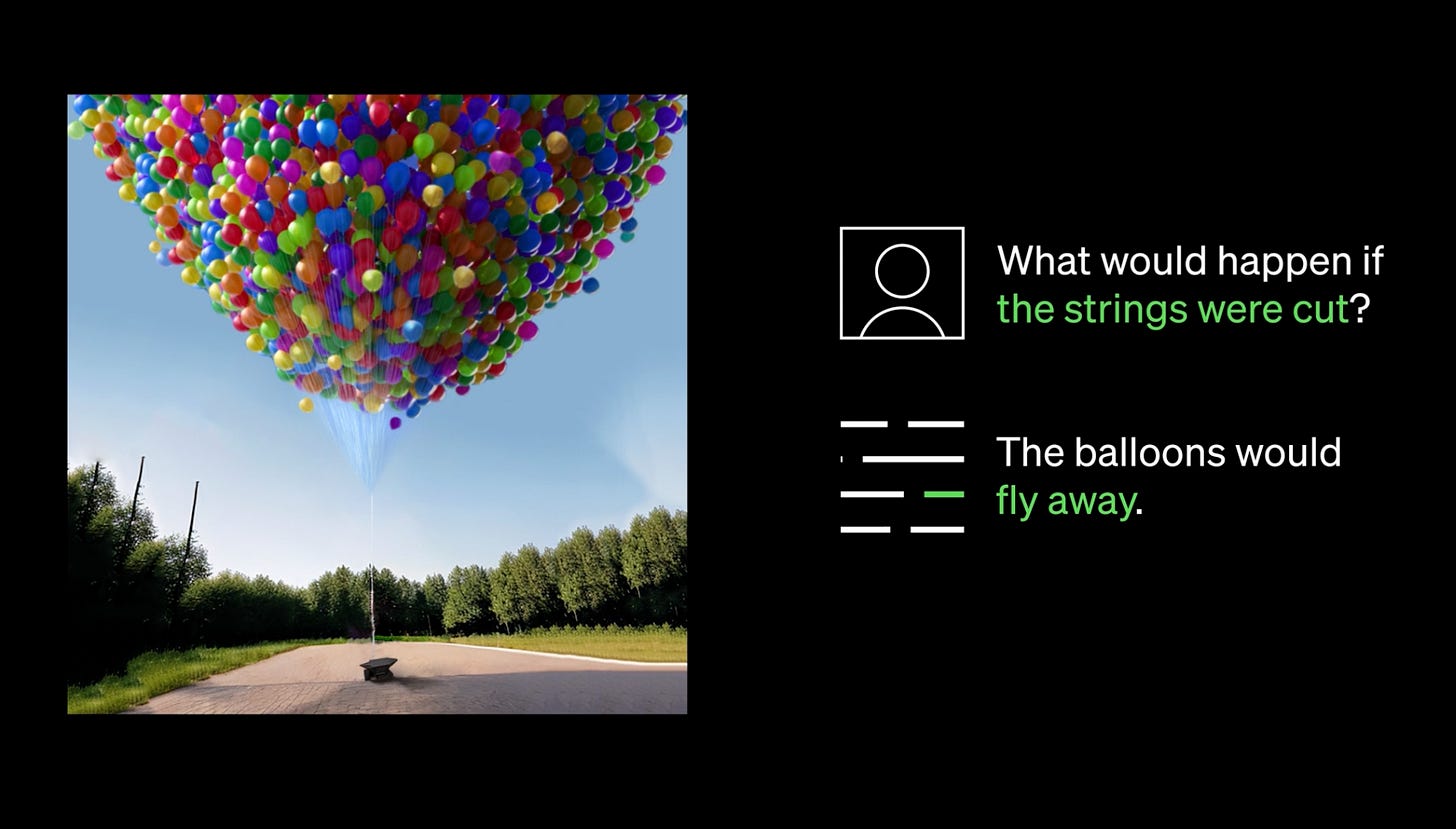

The term "multimodality" refers to the presence or use of multiple modes or channels to communicate or process information. In various contexts, this can involve the combination of different forms of communication, such as text, images, audio, video, and gestures. Multimodality allows for richer, more diverse, and more effective ways to convey or understand complex ideas, as it enables individuals to access and interpret information through multiple sensory channels.

In the context of AI, multimodality refers to the capability of an AI system to process, analyze, and synthesize these various forms of communication and content.

Many AI systems currently focus on one modality. For example, AI damage detectors (e.g., T2D2) “look” at images and use pattern recognition to identify issues like cracks and spalls. ChatGPT (for now; see below) works with text but can’t accept images.

You can see how multimodal capabilities will be key to AI systems advancing to the point where they can pull off something like what I described in this earlier post.

Which brings me to this week’s news…

OpenAI released GPT-4, a new multimodal model that can handle about 8x as much text as the previous ChatGPT model and can understand the content of images.

Anthropic, founded by folks who left OpenAI, released its own chatbot named Claude.

Microsoft confirmed that Bing Chat runs on OpenAI’s GPT-4.

Google announced some forthcoming generative AI tools for Workspace.

LinkedIn is adding AI tools to help write profile content.

Robin AI has released an AI contract editor.

Meta’s large language model, LLaMA, was leaked to the public and sparked a debate about open- vs. closed-source AI.

…there’s more, of course, but those are some highlights. The field is moving super fast, with major updates every few days (or hours). Stay tuned!